Writing down two things I found back in March (as for why this is only going up in May, none of your business.jpg)

new-api nginx configuration

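It started when I was using Codex GPT-5.2 xhigh in RooCode, reverse-proxied through CLIProxyAPI (yes, GPT-5.4/5.5 didn't exist yet at the time), with new-api + nginx sitting in front of it. Requests kept erroring out and retrying halfway through the thinking phase, which dragged down generation quality.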

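CLIProxyAPI's status page showed a lot of "context canceled" entries (the "stream error" ones are presumably problems returned from the Codex side). That message generally means the client cancelled the request itself. After going through the logs, it turned out that new-api had seen its own client close the connection.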

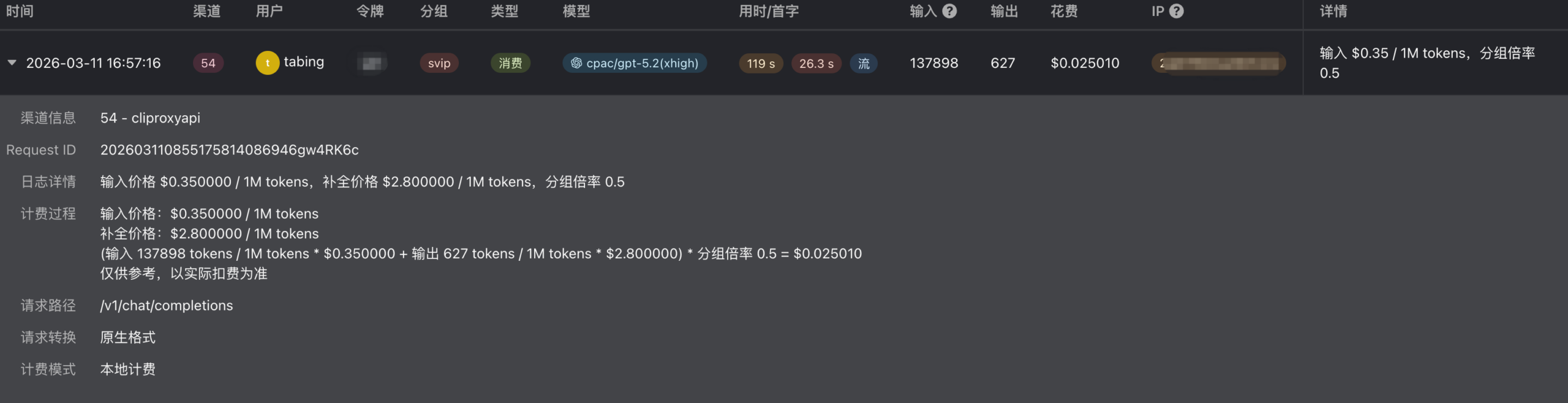

Screenshots of the CLIProxyAPI and new-api admin interfaces:

The logs:

new-api | [INFO] 2026/03/11 - 16:57:11 | 202603110855175814086946gw4RK6c | client disconnected

new-api | [ERR] 2026/03/11 - 16:57:16 | 202603110855175814086946gw4RK6c | timeout waiting for goroutines to exit

new-api | [ERR] 2026/03/11 - 16:57:16 | 202603110855175814086946gw4RK6c | scanner error: read tcp 172.18.0.3:53944->172.18.0.10:8317: use of closed network connection

new-api | [INFO] 2026/03/11 - 16:57:16 | 202603110855175814086946gw4RK6c | 预扣费后补扣费:$0.025010(实际消耗:$0.025010,预扣费:$0.000000)

new-api | [INFO] 2026/03/11 - 16:57:16 | 202603110855175814086946gw4RK6c | record consume log: userId=1, params={"channel_id":54,"prompt_tokens":137898,"completion_tokens":627,"model_name":"cpac/gpt-5.2(xhigh)","token_name":"xxx","quota":12505,"content":"","token_id":3,"use_time_seconds":119,"is_stream":true,"group":"svip","other":{"admin_info":{"local_count_tokens":true,"use_channel":["54"]},"billing_source":"wallet","cache_ratio":1,"cache_tokens":0,"completion_ratio":8,"frt":26282,"group_ratio":0.5,"model_price":-1,"model_ratio":0.175,"request_conversion":["OpenAI Compatible"],"request_path":"/v1/chat/completions","user_group_ratio":-1}}

new-api | [GIN] 2026/03/11 - 16:57:16 | relay | 202603110855175814086946gw4RK6c | 200 | 1m59.025445461s | xxx | POST /v1/chat/completions

new-api | [SYS] 2026/03/11 - 16:57:19 | batch update started

new-api | [SYS] 2026/03/11 - 16:57:19 | batch update finished

cli-proxy-api | [2026-03-11 16:54:16] [3e4629b7] [debug] [conductor.go:2748] Use OAuth provider=codex auth_file=codex-xxx-free.json for model cpac/gpt-5.2(xhigh)

cli-proxy-api | [2026-03-11 16:54:16] [--------] [debug] [apply.go:146] thinking: config from model suffix | provider=codex model=gpt-5.2(xhigh) mode=level budget=0 level=xhigh

cli-proxy-api | [2026-03-11 16:54:16] [--------] [debug] [apply.go:197] thinking: processed config to apply | provider=codex model=gpt-5.2 mode=level budget=0 level=xhigh

cli-proxy-api | [2026-03-11 16:54:53] [--------] [info ] [gin_logger.go:93] 200 | 78ms | xxx | GET "/v0/management/usage"

cli-proxy-api | [2026-03-11 16:55:17] [9319b13e] [debug] [conductor.go:2748] Use OAuth provider=codex auth_file=codex-xxx-free.json for model cpac/gpt-5.2(xhigh)

cli-proxy-api | [2026-03-11 16:55:18] [--------] [debug] [apply.go:146] thinking: config from model suffix | provider=codex model=gpt-5.2(xhigh) mode=level budget=0 level=xhigh

cli-proxy-api | [2026-03-11 16:55:18] [--------] [debug] [apply.go:197] thinking: processed config to apply | provider=codex model=gpt-5.2 mode=level budget=0 level=xhigh

cli-proxy-api | [2026-03-11 16:55:35] [3e4629b7] [info ] [gin_logger.go:93] 200 | 1m19s | 172.18.0.3 | POST "/v1/chat/completions"

cli-proxy-api | [2026-03-11 16:57:16] [9319b13e] [info ] [gin_logger.go:93] 200 | 1m58s | 172.18.0.3 | POST "/v1/chat/completions"

cli-proxy-api | [2026-03-11 16:57:23] [bc46cb1c] [debug] [conductor.go:2748] Use OAuth provider=codex auth_file=codex-xxx-free.json for model cpac/gpt-5.2(xhigh)

cli-proxy-api | [2026-03-11 16:57:24] [--------] [debug] [apply.go:146] thinking: config from model suffix | provider=codex model=gpt-5.2(xhigh) mode=level budget=0 level=xhigh

cli-proxy-api | [2026-03-11 16:57:24] [--------] [debug] [apply.go:197] thinking: processed config to apply | provider=codex model=gpt-5.2 mode=level budget=0 level=xhigh

cli-proxy-api | [2026-03-11 16:57:26] [bc46cb1c] [info ] [gin_logger.go:93] 200 | 3.054s | 172.18.0.3 | POST "/v1/chat/completions"

cli-proxy-api | [2026-03-11 16:57:28] [6d0aabf8] [debug] [conductor.go:2748] Use OAuth provider=codex auth_file=codex-xxx-free.json for model cpac/gpt-5.2(xhigh)

cli-proxy-api | [2026-03-11 16:57:28] [--------] [debug] [apply.go:146] thinking: config from model suffix | provider=codex model=gpt-5.2(xhigh) mode=level budget=0 level=xhigh

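cli-proxy-api | [2026-03-11 16:57:28] [--------] [debug] [apply.go:197] thinking: processed config to apply | provider=codex model=gpt-5.2 mode=level budget=0 level=xhigh

After I switched to connecting to CLIProxyAPI directly, the "context canceled" problem went away. Thinking it over, the likely cause is that GPT-5.2 xhigh emits its thinking output in bursts. If the gap between two bursts goes past a certain number of seconds with nothing new coming back (nginx's proxy_read_timeout defaults to 60 seconds), nginx may cut the connection, and new-api then closes its side as well.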
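To make the idle-timeout theory concrete, here is a minimal Python sketch. It assumes you have somehow recorded when each streamed chunk arrived (seconds since the request started); the function name `find_stalls` and the sample timestamps are made up for illustration and are not taken from the logs above. It flags every silence between two consecutive chunks that is at least as long as the read timeout, which is the situation in which nginx would be expected to drop the upstream connection.

```python
from typing import Iterable, List, Tuple


def find_stalls(arrival_times: Iterable[float],
                read_timeout: float = 60.0) -> List[Tuple[float, float]]:
    """Return (last_chunk_time, gap) for every silence >= read_timeout.

    The timeout is compared against the gap between two successive chunks,
    not against the total duration of the response.
    """
    stalls = []
    previous = None
    for t in arrival_times:
        if previous is not None and t - previous >= read_timeout:
            stalls.append((previous, t - previous))
        previous = t
    return stalls


# Hypothetical chunk arrival times in seconds (illustrative only).
sample = [0, 26, 30, 95, 119]

print(find_stalls(sample))         # [(30, 65)]: one 65 s silence with a 60 s timeout
print(find_stalls(sample, 300.0))  # []: no stall once the timeout is 300 s
```

With the 60-second default, the 65-second silence in the sample would be long enough to get the connection killed; with 300 seconds the same stream goes through untouched.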

In the end I fixed it by adding the following to the nginx location block:

location / {

proxy_pass http://new-api;

client_max_body_size 200m;

proxy_set_header Referer $http_referer;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_set_header X-Forwarded-Proto $scheme;

proxy_set_header Host $http_host;

proxy_buffering off;

proxy_cache off;

proxy_read_timeout 300s;

proxy_send_timeout 300s;

chunked_transfer_encoding on;

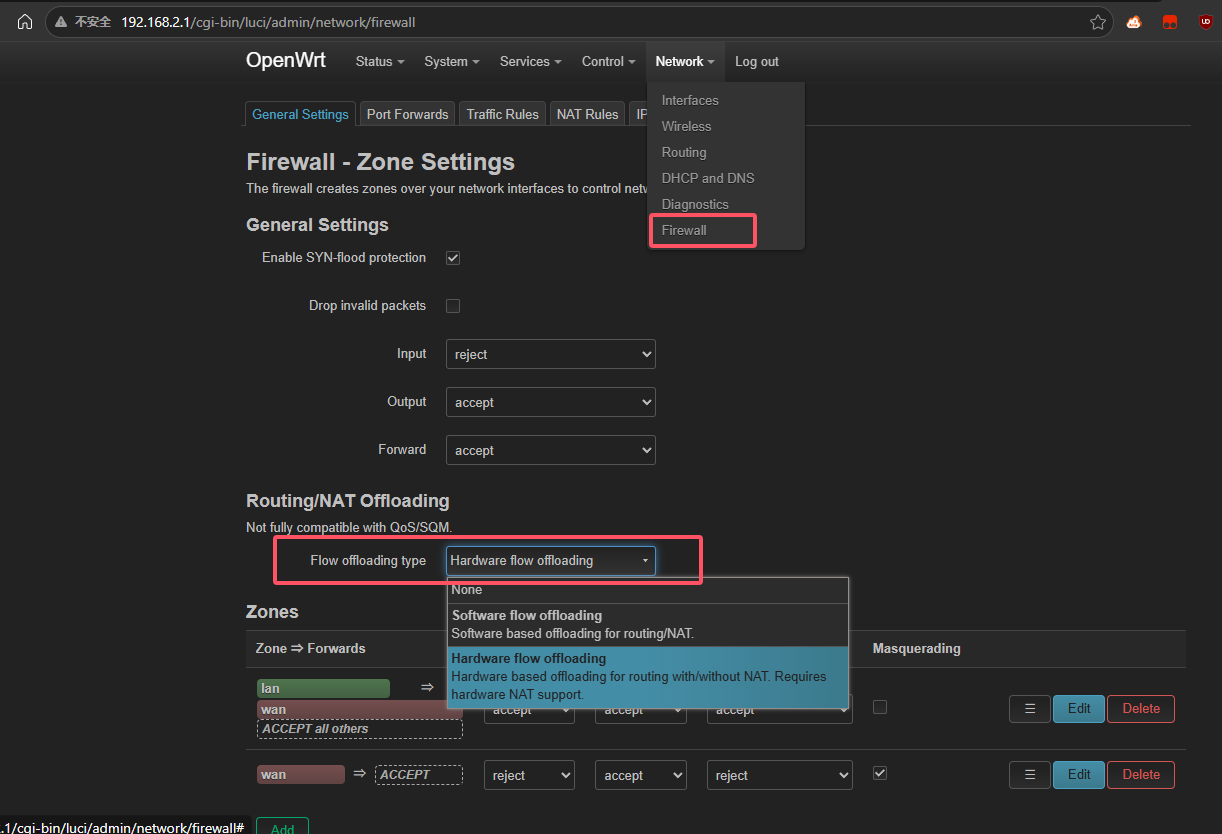

}openwrt 硬件NAT

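This one came up during VR streaming: as soon as the PC started downloading anything, the stream stuttered to the point of being unusable (total network traffic was only around 20 MiB/s with roughly 1000 connections, which didn't strike me as much…)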

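The router is an R3P (OpenWrt device page). Sure, it's an old-timer from years back, but I figured it surely couldn't be failing to saturate 1 Gbps on the LAN… Then I ran top while it was under load, and softirq was sitting at 25% (one physical core fully used).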
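If you would rather get that number without eyeballing top, the softirq share can be computed from two snapshots of the aggregate `cpu` line in `/proc/stat`. The sketch below is only an illustration: the helper name `softirq_share` and the sample counter values are hypothetical, and it assumes the standard Linux field order (user, nice, system, idle, iowait, irq, softirq, steal, ...).

```python
def softirq_share(before: str, after: str) -> float:
    """Percentage of CPU time spent in softirq between two 'cpu' lines."""

    def fields(line: str) -> list:
        parts = line.split()
        if not parts or parts[0] != "cpu":
            raise ValueError("expected the aggregate 'cpu' line from /proc/stat")
        return [int(x) for x in parts[1:]]

    deltas = [b - a for a, b in zip(fields(before), fields(after))]
    # Sum user..steal only; guest time is already counted inside user.
    total = sum(deltas[:8])
    if total <= 0:
        return 0.0
    return 100.0 * deltas[6] / total  # index 6 is the softirq counter


# Hypothetical snapshots taken a few seconds apart (values in clock ticks).
snap_1 = "cpu 1000 0 500 8000 0 0 500 0 0 0"
snap_2 = "cpu 1050 0 525 8300 0 0 625 0 0 0"

print(softirq_share(snap_1, snap_2))  # 25.0
```

On a real system the two strings would come from reading the first line of `/proc/stat` twice with a short sleep in between.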

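After some searching, I found that in newer OpenWrt (not all that new anymore, really, but the setting has moved compared with OpenWrt 17) hardware NAT is tucked away in an option under Firewall: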

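Once I changed it, everything was fine right away. The LAN easily reaches 1 Gbps, and even Steam downloads now go above 500 Mbps (I had been told this crappy rental was on a 300 Mbps line and never gave it a second thought; turns out it was the router doing NAT in software the whole time. Unbelievable).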
